Our representation uses a two-dimensional array of images to form a four-dimensional light field of a scene. From this light field, we can compute novel views of the captured scene with greater flexibility than a real camera and higher quality than a three-dimensional computer graphics model. Our work addresses the following problems:

- Light Field Acquisition Devices

- Light Field Processing and Rendering

- Light Field Viewing Algorithms

- Light Field Display Devices
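
To make the representation concrete, here is a minimal Python sketch of how a ray is looked up in a light field stored as a two-dimensional array of images. It assumes the common two-plane parameterization: the cameras lie on the plane z = 0 (coordinates s, t) and every image is rectified to a shared image plane at z = `uv_depth` (coordinates u, v). The function names, the `lf[t][s][v][u]` storage order, and the nearest-neighbor lookup are illustrative assumptions, not the code used in our system.

```python
import math
from typing import List, Optional, Tuple

# Hypothetical storage order: lf[t][s][v][u], i.e. a 2D grid of grayscale images.
LightField4D = List[List[List[List[float]]]]


def ray_to_stuv(origin: Tuple[float, float, float],
                direction: Tuple[float, float, float],
                uv_depth: float = 1.0) -> Tuple[float, float, float, float]:
    """Intersect a ray with the camera plane z=0 (s, t) and the image plane z=uv_depth (u, v)."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    if dz == 0:
        raise ValueError("ray is parallel to the parameterization planes")
    a = (0.0 - oz) / dz        # ray parameter where it crosses the camera plane
    b = (uv_depth - oz) / dz   # ray parameter where it crosses the image plane
    return (ox + a * dx, oy + a * dy, ox + b * dx, oy + b * dy)


def _nearest(x: float, spacing: float) -> int:
    # Round half up to the index of the nearest sample.
    return math.floor(x / spacing + 0.5)


def sample_nearest(lf: LightField4D, s: float, t: float, u: float, v: float,
                   cam_spacing: float = 1.0, pixel_size: float = 1.0) -> Optional[float]:
    """Return the value of the nearest captured ray, or None if it falls outside the data."""
    si, ti = _nearest(s, cam_spacing), _nearest(t, cam_spacing)
    ui, vi = _nearest(u, pixel_size), _nearest(v, pixel_size)
    if (0 <= ti < len(lf) and 0 <= si < len(lf[0])
            and 0 <= vi < len(lf[0][0]) and 0 <= ui < len(lf[0][0][0])):
        return lf[ti][si][vi][ui]
    return None
```

A novel view is rendered by casting one ray per output pixel through the virtual camera and calling these two functions; a practical renderer would interpolate between neighboring cameras and pixels rather than taking the single nearest sample.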

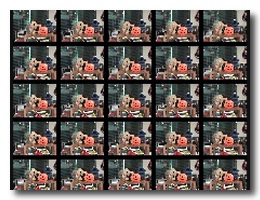

The ultimate goal of this project is to construct a randomly accessible two-dimensional camera array capable of synthesizing novel views of dynamic scenes.

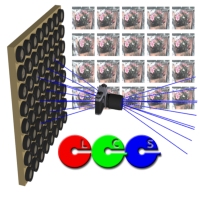

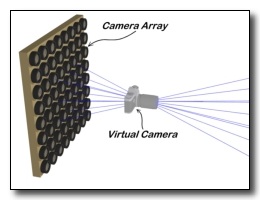

We are designing a two-dimensional camera array for acquiring and processing light fields in real time. In effect, the entire camera array can be regarded as a dynamic light-field data structure: the array is mapped into the host computer system as a block of memory, and the pixels of each camera are randomly accessible by the host. These dynamic light fields are accessed on the fly to generate the desired images. Because the host can read just the pixels it needs, this memory-like interface significantly reduces latency in the image generation process and makes efficient use of the available bandwidth. The camera array will employ a modular design consisting of a motherboard, sensor pods that support a range of imagers, and a PCI interface to the host optimized for high throughput.
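
The following Python sketch illustrates what random pixel access into a memory-mapped camera array could look like. It assumes a hypothetical layout in which camera images are stored back to back in row-major camera order and each image is itself row-major; the names `ArrayLayout` and `read_pixel` are illustrative, and a `bytearray` stands in for the memory window exposed over the PCI interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArrayLayout:
    """Hypothetical layout of a camera array mapped as one contiguous memory block."""
    cam_rows: int
    cam_cols: int
    height: int               # pixel rows per camera image
    width: int                # pixel columns per camera image
    bytes_per_pixel: int = 1

    @property
    def image_bytes(self) -> int:
        return self.height * self.width * self.bytes_per_pixel

    @property
    def total_bytes(self) -> int:
        return self.cam_rows * self.cam_cols * self.image_bytes

    def offset(self, cam_row: int, cam_col: int, y: int, x: int) -> int:
        """Byte offset of pixel (y, x) of camera (cam_row, cam_col)."""
        if not (0 <= cam_row < self.cam_rows and 0 <= cam_col < self.cam_cols
                and 0 <= y < self.height and 0 <= x < self.width):
            raise IndexError("pixel address out of range")
        camera_index = cam_row * self.cam_cols + cam_col  # cameras stored row-major
        return camera_index * self.image_bytes + (y * self.width + x) * self.bytes_per_pixel


def read_pixel(memory: bytearray, layout: ArrayLayout,
               cam_row: int, cam_col: int, y: int, x: int) -> bytes:
    """Fetch one pixel directly, without transferring the rest of the frame."""
    start = layout.offset(cam_row, cam_col, y, x)
    return bytes(memory[start:start + layout.bytes_per_pixel])
```

Under this assumed layout, rendering a view touches only the bytes addressed by the rays it needs, which is the kind of access pattern a memory-like interface makes cheap compared with streaming every full frame to the host.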

We are also developing new processing techniques for light fields. First, we have added the ability to vary the apparent focus and depth of field within a light field using intuitive camera-like controls such as a variable aperture and a focus ring. Unlike a traditional camera, however, we allow for more general and flexible focal surfaces than the usual focal plane. Our techniques are based on dynamically reparameterizing the light-field data structure, which gives us greater control over the image reconstruction process. These reparameterization methods operate independently of scene geometry: we do not need to recover the actual or approximate geometry of the scene in order to focus. Our techniques also allow multiple focal surfaces within a single rendered image.
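
The basic idea behind camera-like focus and aperture controls can be illustrated with synthetic-aperture shift-and-add in "flatland" (a single row of cameras, each producing a one-dimensional image). This is a minimal sketch under stated assumptions, not our reparameterization code: the name `refocus_1d`, the disparity sign convention, integer-pixel shifts, and the box-shaped aperture are all illustrative. Here `focal_disparity` plays the role of the focus ring and may vary from pixel to pixel, which is how a non-planar focal surface is expressed, while `aperture` selects which cameras contribute. No scene geometry is estimated.

```python
from typing import Callable, List, Sequence


def refocus_1d(images: Sequence[Sequence[float]],
               cam_positions: Sequence[float],
               focal_disparity: Callable[[int], float],
               aperture: float,
               center: float = 0.0) -> List[float]:
    """Shift-and-add refocusing of a flatland light field (a 1D row of 1D images).

    Assumed convention: a point seen at pixel x from the virtual camera at `center`
    appears at pixel x + d * (s - center) in the camera at position s, where d is
    its disparity. Choosing d selects the depth that comes into focus.
    """
    width = len(images[0])
    out: List[float] = []
    for x in range(width):
        d = focal_disparity(x)  # focal surface: d may differ from pixel to pixel
        total, count = 0.0, 0
        for img, s in zip(images, cam_positions):
            if abs(s - center) > aperture:
                continue  # camera lies outside the synthetic aperture
            xs = x + round(d * (s - center))  # nearest-pixel shift, no interpolation
            if 0 <= xs < width:
                total += img[xs]
                count += 1
        out.append(total / count if count else 0.0)
    return out
```

With `aperture=0` only the central camera contributes, giving a pinhole image with everything sharp; widening the aperture blurs points whose disparity differs from `focal_disparity(x)`. A piecewise function such as `lambda x: 1.0 if x < 64 else 0.0` would bring two different depths into focus in different parts of the same image.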

We are also developing low-cost devices for acquiring light fields. This effort not only benefits our research directly but also opens up many new opportunities. The system's small size and portability allow us to easily acquire outdoor light fields of natural scenes, and it is currently also our fastest acquisition device.

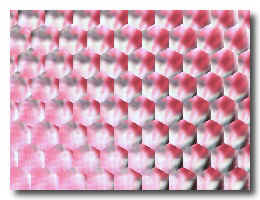

We are also working on passive autostereoscopic devices for the direct viewing of light fields. Our display allows multiple viewers to see a light field stereoscopically from a wide viewing range, and it requires no tracking. The key component of our viewing system is a prefabricated lens-array sheet whose thickness equals the focal length of each lenslet.

This allows us to make a view-dependent image whose spatial resolution is set by the size of the lenslets. Currently, we are using a lens array in a hexagonal pattern, where each lens has a diameter of approximately 0.1" and a focal length of 0.12". Under the entire sheet, we place a large composite image made up of smaller hexagonal images. Each small image is a picture of the light field taken from one point in space. When viewed through the lenses, a different pixel in the small image is seen for each view direction. In addition, the lens optics perform interpolation. When the entire array is viewed, each lens takes on the role of a single pixel in the final autostereoscopic image.
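
The lenslet geometry can be checked with a short worked calculation. Because the composite image lies in each lenslet's focal plane, every viewing direction θ maps to a single point on the small image, offset f·tan θ from the lens axis on the side opposite the viewer. That point reaches the edge of the lenslet when tan θ = (D/2)/f = 0.05/0.12, so θ_max = atan(5/12) ≈ 22.6°, or roughly a 45° range of directions over which each lenslet shows its own small image. The Python sketch below encodes this, assuming ideal paraxial thin lenses, a disk-shaped footprint of diameter D (the real cells are hexagonal), and hexagonal packing with a center-to-center spacing of D; the function names are hypothetical.

```python
import math
from typing import List, Tuple

LENS_DIAMETER_IN = 0.1   # from the text: approx. 0.1" lenslet diameter
FOCAL_LENGTH_IN = 0.12   # from the text: 0.12" focal length (= sheet thickness)


def hex_lens_centers(rows: int, cols: int,
                     pitch: float = LENS_DIAMETER_IN) -> List[Tuple[float, float]]:
    """Lens centers for hexagonal packing (assumed center spacing = pitch)."""
    row_step = pitch * math.sqrt(3) / 2  # vertical distance between rows
    centers = []
    for r in range(rows):
        x0 = pitch / 2 if r % 2 else 0.0  # odd rows shifted by half a lens
        for c in range(cols):
            centers.append((x0 + c * pitch, r * row_step))
    return centers


def point_under_lens(center: Tuple[float, float], theta_x: float, theta_y: float,
                     f: float = FOCAL_LENGTH_IN) -> Tuple[float, float]:
    """Point of the composite image seen through one lenslet from direction
    (theta_x, theta_y) in radians; it lies opposite the viewer, f*tan(theta) off-axis."""
    cx, cy = center
    return (cx - f * math.tan(theta_x), cy - f * math.tan(theta_y))


def max_view_half_angle(d: float = LENS_DIAMETER_IN, f: float = FOCAL_LENGTH_IN) -> float:
    """Largest off-axis angle (degrees) before the viewed point leaves the lenslet's
    footprint, approximating that footprint as a disk of diameter d."""
    return math.degrees(math.atan((d / 2) / f))
```

Since each lenslet contributes one pixel of the final image, the number of distinct views along one direction is roughly the lenslet diameter divided by the pixel pitch of the printed composite image.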

"Light Fields on the Cheap" Progress PowerPoint Presentation
